Below is the letter PCSP, NYCLU and AI for Families sent yesterday to the NYC Department of Health, in response to their latest letter dated Dec. 18, 2024.

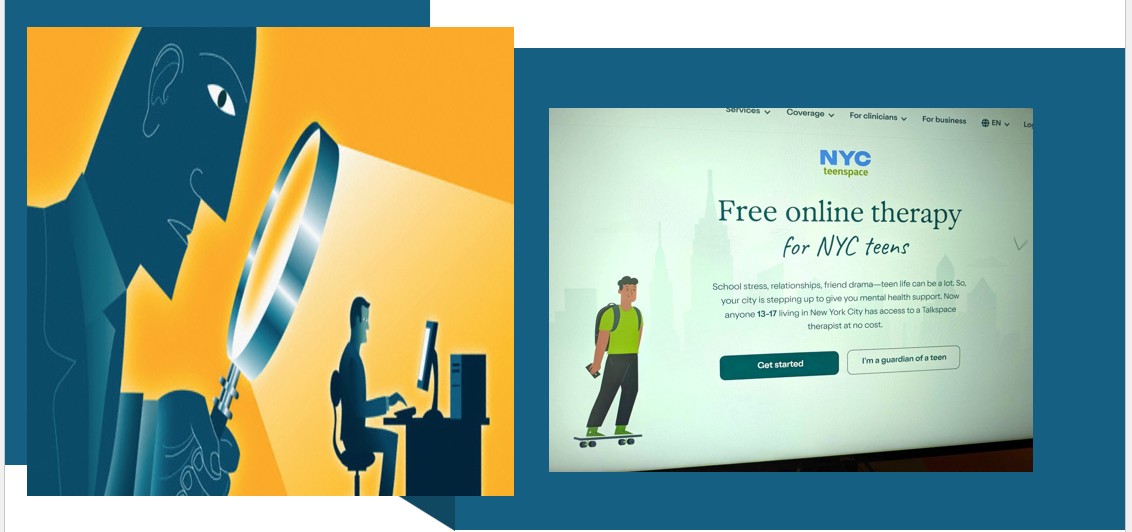

We were happy to learn that the Department of Health and Mental Hygiene (DOHMH) is now requiring Talkspace to rewrite its contract for providing online mental health services to NYC teens, as well as its Privacy Policy and Terms of Service, to be more privacy protective, in response to the concerns we expressed on September 10 and October 17, 2024.

Yet we find DOHMH's claim in its latest missive, that the Talkspace/Teenspace website has now eliminated invasive ad trackers, cookies and disclosures of personal information to social media platforms, to be wholly inaccurate.

In this follow-up letter we discuss our findings and continuing concerns. We urge DOHMH to make more effective efforts to protect the privacy of NYC teens, including requiring Talkspace to build an entirely new website dedicated to NYC Teenspace services, free of trackers and disclosures, and to have that site undergo a privacy audit before it goes live.

Talkspace should also be required to delete all the personal information of NYC children that it has already illegitimately collected and shared, and to apologize and make recompense to the families whose privacy was violated. We also ask whether, if Talkspace has violated its original contract as DOHMH has implied, the company will be fined or face any other penalty.

NYCLU PCSP AIF Reply to DOHMH re persistent privacy issues w. Teenspace 1.8.2025